Future Of AI Chatbots: Trends, Agents, And Business Impact

Shlok Sobti

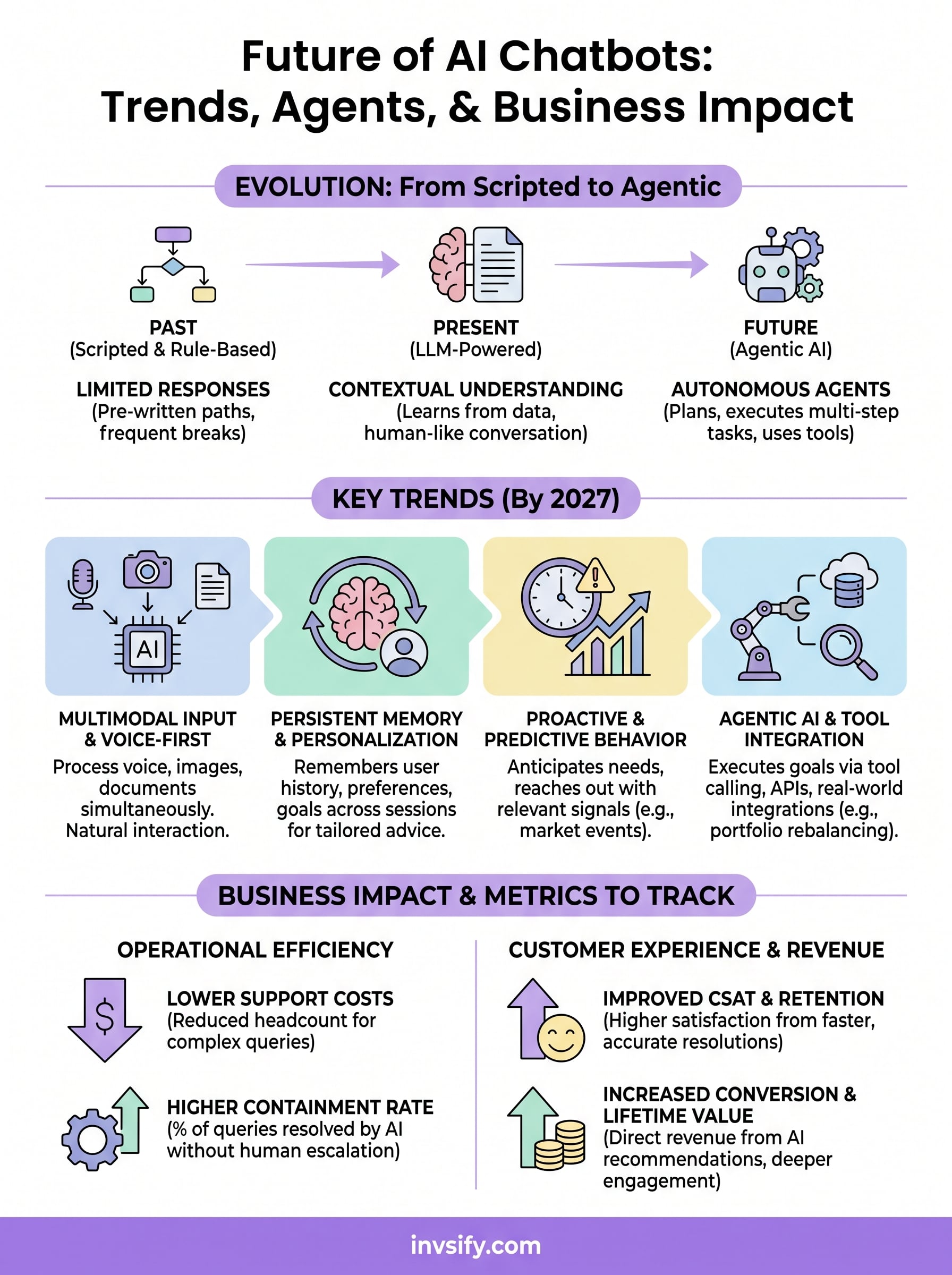

AI chatbots have moved well beyond scripted responses and basic FAQ handling. In just a few years, they've become capable of holding nuanced conversations, processing complex queries, and even making autonomous decisions. The future of AI chatbots points toward something far more ambitious: systems that don't just respond but anticipate, reason, and act on behalf of users across industries, from healthcare to personal finance.

This shift matters especially in sectors where trust and accuracy are non-negotiable. At Invsify, we've built our SEBI-registered, AI-powered advisory platform around this very evolution. Our Conversational RM AI already provides 24/7 multilingual financial guidance, portfolio insights, and personalized recommendations, but what's coming next in conversational AI will reshape how millions of Indians manage and grow their wealth.

So where exactly are chatbots headed? What separates a chatbot from an AI agent, and why should businesses, particularly in financial services, pay attention? This article breaks down the major trends driving chatbot evolution, from agentic AI and multimodal capabilities to hyper-personalization and regulatory considerations. You'll also get a clear picture of how these advancements are already influencing business operations, customer experience, and investment advisory. Whether you're a product builder, a business leader, or an investor trying to understand the technology behind the tools you use, this is what you need to know.

Why AI chatbots are changing so fast

The pace of chatbot evolution isn't accidental. Three converging forces, working simultaneously, have compressed what would have taken decades of incremental progress into just a few years. Understanding these forces helps you see why the future of AI chatbots looks so dramatically different from what you encountered even three years ago, and why that gap will keep widening as each force amplifies the others.

The large language model breakthrough

For most of their history, chatbots ran on rule-based systems where developers had to manually script every possible conversation path. These systems broke down the moment a user asked something slightly unexpected. That changed fundamentally when large language models (LLMs) entered the picture. Models like GPT-4 and Google's Gemini learned from billions of text examples, giving them the ability to understand context, infer intent, and generate coherent, human-like responses without needing pre-written scripts.

The shift from rule-based bots to LLM-powered systems is arguably the single largest leap in conversational AI history, comparable to moving from manual calculators to modern computers.

Developers no longer had to anticipate every possible question. Instead, they could train a model on vast domains of knowledge and let it handle natural variation in how people communicate. For businesses, this reduced deployment complexity significantly and opened chatbot use to genuinely complex domains like medical triage, legal assistance, and investment advisory, areas where rigid scripts simply couldn't function before.

Compute power and data availability

Behind every capable AI model sits an enormous amount of raw compute power. The rapid improvement of GPUs and the expansion of cloud infrastructure, driven by providers like Microsoft Azure and Google Cloud, made it economically viable to train and run models at a scale that was impractical just five years ago. You can now access a model capable of holding a sophisticated financial conversation through a standard API call, something that would have required a dedicated data center back in 2018.

Data availability compounded this effect. The internet generated trillions of data points across text, voice, images, and structured records, giving models a rich training foundation. When you combine that breadth of data with training techniques like reinforcement learning from human feedback (RLHF), the result is a chatbot whose behavior is shaped by human judgments of its responses rather than by static rule updates.

User expectations raised the bar

Technology rarely changes in isolation. As LLM-powered tools became widely accessible through consumer apps, user expectations shifted rapidly. People who experienced a genuinely helpful AI assistant in one context began demanding the same quality everywhere, including from the businesses they rely on for banking, insurance, and financial planning. What once felt impressive became the baseline.

This created competitive pressure across every sector. Companies that deployed outdated, script-based bots faced growing friction from users who already knew what better looked like. The result was a rapid adoption cycle where businesses upgraded their conversational AI infrastructure far faster than they would have under normal market conditions, further compressing the timeline of chatbot evolution and raising the floor for what counts as an acceptable user experience.

Trends that will define AI chatbots by 2027

The future of AI chatbots isn't one single breakthrough; it's a cluster of parallel trends converging at the same time. By 2027, you'll interact with systems that don't just understand your words but process your tone, anticipate your needs, and remember your history. Knowing which trends to watch helps you position your business or personal financial strategy for what's already in motion.

Multimodal input and voice-first interaction

Today's chatbots primarily process text. Tomorrow's will process voice, images, documents, and data simultaneously. Multimodal AI models, such as Google's Gemini family, can already analyze a photograph, read a financial statement, and hold a spoken conversation within the same session. For you as a user, this means far more natural interactions where you don't need to reduce your query to a typed sentence.

Voice-first design will accelerate this shift, particularly in mobile-heavy markets like India where voice input often feels faster than typing. Financial platforms will let you speak naturally about your portfolio performance, hear a spoken summary of your returns, and receive real-time guidance in your preferred language, all without opening a single spreadsheet.

Hyper-personalization through memory systems

Most current chatbots reset between sessions. The next generation will carry persistent memory across every interaction, building a continuously updated model of your preferences, goals, and financial behavior. Rather than answering generic questions, these systems will reference your past decisions and adapt recommendations specifically to your context.

Memory-enabled AI chatbots will shift the experience from answering questions to genuinely knowing the person asking them.

That means a financial chatbot won't just explain SIP options in general terms. It will know your existing portfolio composition, your risk tolerance from previous conversations, and flag a new opportunity that fits the specific goals you mentioned six months ago.
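To make the idea of persistent memory concrete, here is a minimal sketch in Python. It assumes a simple JSON file as the store, and the `MemoryStore` class, the `matches_profile` helper, and the profile fields (`risk_tolerance`, `goals`) are hypothetical names chosen for illustration. A production system would need a proper database, access controls, and consent handling.

```python
import json
from pathlib import Path


class MemoryStore:
    """Hypothetical per-user memory that survives between chat sessions."""

    def __init__(self, path):
        self.path = Path(path)
        # Load earlier sessions' facts if the file already exists.
        self.data = json.loads(self.path.read_text()) if self.path.exists() else {}

    def remember(self, user_id, key, value):
        """Store one fact about a user and write it to disk immediately."""
        self.data.setdefault(user_id, {})[key] = value
        self.path.write_text(json.dumps(self.data))

    def recall(self, user_id):
        """Return everything known about a user (empty dict if nothing yet)."""
        return self.data.get(user_id, {})


def matches_profile(profile, product):
    """True when a product fits the remembered risk tolerance and a stated goal."""
    return (
        product["risk"] == profile.get("risk_tolerance")
        and product["goal"] in profile.get("goals", [])
    )
```

In this sketch, a fact saved with `remember` in one session is available to a new `MemoryStore` opened on the same file later, which is what lets the assistant check a new product against goals you mentioned months earlier.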

Proactive and predictive behavior

The biggest shift coming by 2027 is the move from reactive to proactive chatbot behavior. Instead of waiting for your query, AI systems will monitor relevant signals and reach out when something requires your attention, whether that's a market event, a tax deadline, or a portfolio rebalancing opportunity.

Predictive capability depends on connecting chatbots to live data streams and analytical models. Platforms that invest in this infrastructure now will secure a significant head start, especially in financial services where timing directly affects outcomes.
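A proactive check can start out as a set of simple rules evaluated against fresh data. The sketch below is illustrative only: the function name `pending_alerts`, the 5% drift limit, and the 14-day notice window are assumptions, and a real platform would feed it from live market and account data rather than hand-written dictionaries.

```python
from datetime import date


def pending_alerts(weights, targets, deadlines, today, drift_limit=0.05, notice_days=14):
    """Return messages the assistant could send without waiting for a query.

    weights/targets: asset -> portfolio share (0 to 1).
    deadlines: label -> due date. All thresholds are illustrative assumptions.
    """
    alerts = []
    for asset, target in targets.items():
        drift = weights.get(asset, 0.0) - target
        if abs(drift) > drift_limit:
            alerts.append(f"{asset} is {drift:+.0%} off target; consider rebalancing")
    for label, due in deadlines.items():
        days_left = (due - today).days
        if 0 <= days_left <= notice_days:
            alerts.append(f"{label} due in {days_left} days")
    return alerts


alerts = pending_alerts(
    weights={"equity": 0.68, "debt": 0.32},
    targets={"equity": 0.60, "debt": 0.40},
    deadlines={"Tax filing": date(2025, 3, 15)},
    today=date(2025, 3, 5),
)
```

Passing `today` in as a parameter keeps the check deterministic and easy to test. Predictive models would replace or supplement these fixed thresholds.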

How modern AI chatbots work as agents

The term "agent" gets used loosely, but it has a specific technical meaning in AI. An agentic AI chatbot doesn't just generate a response and stop. It plans a sequence of steps, uses tools, evaluates its own output, and keeps going until it completes a goal. This is a meaningful departure from the simple request-response model that most people associate with chatbots, and it's central to understanding where the future of AI chatbots is actually headed.

From single-turn responses to multi-step tasks

Traditional chatbots handle one exchange at a time. You ask, they answer, and the interaction effectively resets. Agentic systems maintain a goal across multiple steps, breaking a complex task into sub-tasks and executing each one in sequence. Think of the difference between asking for directions and hiring a driver. One gives you information; the other takes action on your behalf until you reach your destination.

Agentic AI shifts the chatbot's role from information dispenser to autonomous task executor, which changes the entire calculus of what you can delegate to a machine.

This architecture relies on a planning layer that sits above the language model itself. The model generates a plan, evaluates whether each step succeeded, and adjusts if something fails. For example, if you ask an agentic financial assistant to rebalance your portfolio within a specific risk threshold, it won't just explain how rebalancing works. It will analyze your current holdings, calculate the required trades, and flag anything that needs your approval before execution.
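The rebalancing example can be sketched in a few lines of Python. This is a simplified illustration, not a description of any specific product: the `Trade` class, `plan_rebalance`, `run_agent`, and the `approval_limit` parameter are hypothetical, the plan is computed by plain arithmetic where a real agent would involve a language model, and the evaluate-and-retry step is left out for brevity.

```python
from dataclasses import dataclass


@dataclass
class Trade:
    asset: str
    amount: float          # positive = buy, negative = sell (currency units)
    needs_approval: bool   # True when the trade exceeds the approval limit


def plan_rebalance(holdings, targets, approval_limit):
    """Plan the trades that move current holdings to the target weights."""
    total = sum(holdings.values())
    trades = []
    for asset, weight in targets.items():
        delta = weight * total - holdings.get(asset, 0.0)
        if abs(delta) < 1e-9:
            continue  # already on target, nothing to trade
        trades.append(Trade(asset, round(delta, 2), abs(delta) > approval_limit))
    return trades


def run_agent(holdings, targets, approval_limit):
    """Apply small trades to a copy of the holdings; hold large ones for sign-off."""
    state = dict(holdings)
    executed, pending = [], []
    for trade in plan_rebalance(state, targets, approval_limit):
        if trade.needs_approval:
            pending.append(trade)  # flag for the user instead of acting
            continue
        state[trade.asset] = state.get(trade.asset, 0.0) + trade.amount
        executed.append(trade)
    return state, executed, pending
```

The design choice worth noting is the split between `executed` and `pending`: the agent acts on its own only below a threshold you set, and everything above it waits for your approval.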

Tool use and real-world integrations

Agents extend their reach through tool calling, the ability to invoke external systems like databases, APIs, calculators, or web search within a single task run. Rather than being limited to what's stored in its training data, the chatbot pulls live, structured information from connected sources and incorporates it directly into its reasoning.

Platforms like Microsoft Azure AI have built entire frameworks around tool-enabled agents, letting developers connect language models to enterprise data sources, transaction systems, and real-time feeds. For financial platforms, this means an AI that can cross-reference market data, check regulatory requirements, and personalize advice based on your actual account activity, all within a single interaction rather than across multiple disconnected sessions.
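Under the hood, tool calling usually comes down to a registry of functions plus a dispatcher that runs whichever one the model asks for. The following Python sketch shows that pattern in a vendor-neutral way. The tool names, the hard-coded prices, and the `{"name": ..., "arguments": ...}` call shape are assumptions for illustration and do not reflect any particular platform's API.

```python
from typing import Callable, Dict

# Registry of tools the model is allowed to call (hypothetical names).
TOOLS: Dict[str, Callable[..., object]] = {}


def tool(name):
    """Decorator that registers a function under a tool name."""
    def register(fn):
        TOOLS[name] = fn
        return fn
    return register


@tool("get_price")
def get_price(symbol: str) -> float:
    # Stand-in for a live market data feed; values are made up.
    prices = {"FUND_A": 102.5, "FUND_B": 48.0}
    return prices[symbol]


@tool("position_value")
def position_value(symbol: str, units: float) -> float:
    return get_price(symbol) * units


def dispatch(call: dict) -> dict:
    """Run one tool call shaped like {"name": ..., "arguments": {...}}."""
    name = call["name"]
    if name not in TOOLS:
        return {"ok": False, "error": f"unknown tool: {name}"}
    try:
        return {"ok": True, "result": TOOLS[name](**call["arguments"])}
    except (KeyError, TypeError) as exc:
        # Bad symbol or wrong arguments: report back instead of crashing.
        return {"ok": False, "error": str(exc)}
```

Returning errors as data rather than raising them lets the agent see what went wrong and try a different call, which is what keeps a multi-step task moving.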

Business impact and metrics to track

AI chatbots don't just improve conversations. They produce measurable shifts in operational efficiency, revenue retention, and customer lifetime value that show up directly in your financial statements. To benefit from the future of AI chatbots, you need to know which numbers to watch and why they move.

Cost reduction and operational efficiency

The most immediate business impact shows up in support cost per resolution. Traditional customer service requires human agents for every complex query, which means headcount scales linearly with demand. An AI chatbot handles thousands of simultaneous conversations without adding staffing costs, so your cost per interaction drops sharply as volume grows. For financial services firms handling recurring queries about portfolio performance, SIP schedules, or tax implications, this efficiency gain compounds quickly.

Businesses that measure containment rate, the percentage of queries fully resolved by the chatbot without human escalation, consistently report it as their highest-leverage metric for operational cost control.

Beyond containment rate, track average handling time (AHT) separately for AI-resolved and human-resolved queries. When your chatbot resolves a query in under 90 seconds that previously took a human agent 12 minutes, that delta tells you exactly where your efficiency gains are concentrated and where to invest next in automation.
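Both metrics are straightforward to compute from session logs. The sketch below assumes each session is a record with an `escalated` flag and a handling time in `seconds`. Those field names and the sample values are hypothetical, so adapt them to whatever your logging actually captures.

```python
from statistics import mean


def containment_rate(sessions):
    """Share of sessions the chatbot resolved without human escalation."""
    if not sessions:
        raise ValueError("no sessions to measure")
    contained = sum(1 for s in sessions if not s["escalated"])
    return contained / len(sessions)


def aht_by_channel(sessions):
    """Average handling time in seconds, split by who resolved the query."""
    ai = [s["seconds"] for s in sessions if not s["escalated"]]
    human = [s["seconds"] for s in sessions if s["escalated"]]
    return {
        "ai": mean(ai) if ai else None,
        "human": mean(human) if human else None,
    }


# Illustrative sample: three AI-resolved sessions and one escalation.
sample = [
    {"escalated": False, "seconds": 60},
    {"escalated": False, "seconds": 90},
    {"escalated": False, "seconds": 75},
    {"escalated": True, "seconds": 720},
]
print(containment_rate(sample))  # 0.75
print(aht_by_channel(sample))    # {'ai': 75, 'human': 720}
```

Keeping the two handling-time averages separate, rather than blending them into one number, is what exposes the gap between AI-resolved and human-resolved queries.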

Customer experience and revenue metrics

Operational savings matter, but customer satisfaction scores and retention rates tell you whether your chatbot is actually building trust or quietly eroding it. Deploy a short post-interaction CSAT survey after every chatbot session. A well-calibrated agentic AI should score consistently above 4 out of 5, because it resolves queries fully rather than deflecting users to a human after wasting their time.

On the revenue side, monitor conversion rate within chatbot sessions for any product or service recommendation the AI surfaces. A financial chatbot that identifies a relevant investment product and guides a user through onboarding in the same session creates a direct, attributable revenue event. Tracking this separately from other acquisition channels gives you a clear return figure to weigh against your chatbot infrastructure investment.

Finally, watch session depth, meaning how many meaningful exchanges occur before a user exits. Deeper sessions signal that users trust the system with more complex questions, which is a leading indicator of long-term platform engagement and higher customer lifetime value.
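The three experience and revenue metrics in this section can be computed the same way from session records. This is a rough sketch: the field names (`recommended`, `converted`, `exchanges`) and the sample data are assumptions, and conversion is measured only over sessions where the chatbot actually surfaced a recommendation.

```python
from statistics import mean


def csat_average(scores):
    """Mean CSAT on a 1 to 5 scale; None when nobody answered the survey."""
    return mean(scores) if scores else None


def conversion_rate(sessions):
    """Share of sessions with a recommendation that ended in a conversion."""
    recommended = [s for s in sessions if s["recommended"]]
    if not recommended:
        return None
    return sum(1 for s in recommended if s["converted"]) / len(recommended)


def average_depth(sessions):
    """Mean number of exchanges per session."""
    return mean(s["exchanges"] for s in sessions) if sessions else None


# Illustrative sample of five chatbot sessions.
sample_sessions = [
    {"recommended": True, "converted": True, "exchanges": 8},
    {"recommended": True, "converted": False, "exchanges": 6},
    {"recommended": True, "converted": False, "exchanges": 4},
    {"recommended": True, "converted": False, "exchanges": 5},
    {"recommended": False, "converted": False, "exchanges": 2},
]
print(csat_average([5, 4, 4, 5]))        # 4.5
print(conversion_rate(sample_sessions))  # 0.25
print(average_depth(sample_sessions))    # 5
```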

What this means for Indian financial advice

India's investment landscape carries specific characteristics that make the future of AI chatbots especially consequential here. A large salaried workforce, rising smartphone penetration, and a regulatory framework shaped by SEBI's mandate for registered, transparent advice create the exact conditions where agentic, personalized AI delivers outsized value compared to markets where traditional advisory infrastructure has existed for generations. The gap between what Indian retail investors need and what they currently receive is wide enough that AI can close it faster than any hiring surge ever could.

The trust gap in retail investing

Indian retail investors have historically relied on mutual fund distributors who earn trailing commissions from the products they recommend. That commission structure creates a clear conflict of interest: the distributor's income grows when they recommend high-cost funds, not when they optimize your actual wealth outcome. SEBI-registered investment advisors operating on a fee-only model remove that conflict entirely, but until recently, accessing that cleaner model required either high net worth or significant effort to find the right advisor.

When AI removes the commission incentive from financial advice, every recommendation you receive reflects your portfolio's actual needs rather than someone else's revenue target.

Running your existing portfolio through a hidden fee calculator shows exactly how much you've paid in trailing commissions over time. For many salaried investors, those cumulative costs are large enough to meaningfully change how they evaluate where they take advice going forward.
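The arithmetic behind such a calculation can be approximated in a few lines. The sketch below is a simplified illustration, not the logic of any specific calculator. It assumes a single lump-sum investment, a constant gross annual return, and that the gap between a commission-bearing plan's expense ratio and a commission-free plan's expense ratio stands in for the trailing commission. All function and parameter names are hypothetical.

```python
def future_value(principal, gross_return, expense_ratio, years):
    """Lump-sum value after compounding at the return net of annual expenses."""
    return principal * (1 + gross_return - expense_ratio) ** years


def commission_drag(principal, gross_return, direct_ratio, regular_ratio, years):
    """Wealth lost to the higher expense ratio over the holding period."""
    direct = future_value(principal, gross_return, direct_ratio, years)
    regular = future_value(principal, gross_return, regular_ratio, years)
    return direct - regular


# Hypothetical inputs: 10% gross return, 0.5% vs 1.5% expense ratio, 10 years.
drag = commission_drag(100_000, 0.10, 0.005, 0.015, 10)
print(round(drag, 2))
```

Because the fee difference compounds every year, the gap grows faster than linearly with the holding period, which is why small annual percentages add up to meaningful sums over a working career.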

Multilingual access across India's investor base

A first-generation investor in Tamil Nadu and a senior professional in Punjab both deserve accurate, contextually relevant financial guidance in the language they think in. Agentic AI chatbots with multilingual capability close this access gap in a way no human advisory network operating at national scale can replicate efficiently, because the AI doesn't experience fatigue, regional staffing gaps, or inconsistency between agents.

For platforms like Invsify, this means the Conversational RM AI delivers consistent 24/7 guidance across language preferences without proportionally increasing support costs. You get the same quality of portfolio insight whether you're asking about your SIP returns in Hindi or your tax-saving options in English, and that consistency at scale will compound as AI advisory adoption grows across India's salaried workforce over the coming years.

Key takeaways

The future of AI chatbots runs on three converging forces: LLM breakthroughs, cheaper compute, and rising user expectations. Those forces are pushing chatbots from reactive responders into agentic systems that plan, use external tools, and complete multi-step tasks without waiting for your next instruction. By 2027, persistent memory, multimodal input, and proactive behavior will set the baseline for every serious platform.

For businesses, the metrics that matter most are containment rate, CSAT, and conversion within chatbot sessions, because those numbers connect directly to cost savings and revenue. For Indian investors specifically, agentic AI addresses two structural problems at once: the commission-driven conflict of interest baked into traditional distribution and the language access gap that has kept quality advice out of reach for millions of salaried professionals.

If you want to see what conflict-free, AI-powered advisory looks like in practice, start your journey with Invsify and take control of your wealth today.